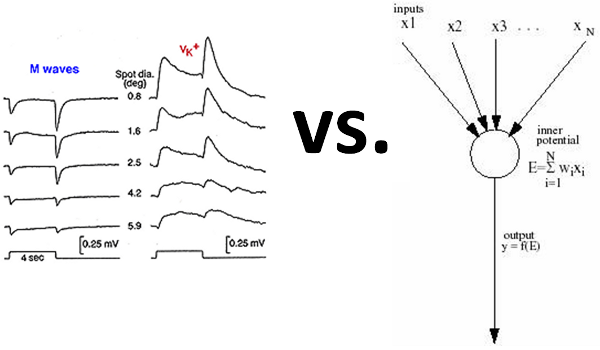

Neuromorphic vs. Neural Net

| Brain | Artificial Neural Network |
| --- | --- |
| Asynchronous | Global synchronous clock |
| Stochastic | Deterministic |
| Shaped waves | Scalar values |
| Storage and compute synonymous | Storage and compute separate |
| Training is a Mystery | Backpropagation |
| Adaptive network topology | Fixed network |
| Cycles in topology | Cycle-free topology |

The diagram of biological brain waves comes from med.utah.edu, and the diagram of an artificial neural network neuron comes from hemming.se.

The table above lists the differences between a regular artificial neural network (feed-forward and non-spiking, to be specific) and a biological brain. An artificial neural network (ANN) is so far removed in architecture and function from a biological brain that attempts to simulate a brain in silicon go by a different term altogether: neuromorphic.

In the table above, if the last row is modified to allow a neural network to have cycles in its network topology, it becomes a recurrent neural network (RNN), which is still not quite neuromorphic. But by also modifying the first row of the table to remove the global synchronous clock, IBM's TrueNorth chip, announced in August 2014, claims the neuromorphic moniker. (Asynchronous neural networks are also called spiking neural networks (SNNs); TrueNorth combines the properties of both RNNs and SNNs.)
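To make the scalar-versus-spike distinction a little more concrete, here is a minimal Python sketch. It rests on assumptions that are not in the text above: the function names are hypothetical, the ReLU activation is an arbitrary choice, and the spiking neuron uses the textbook leaky integrate-and-fire model, which is not a description of TrueNorth's actual neuron model. Note also that this software simulation still steps through time in a loop, whereas asynchronous hardware has no such global step.

```python
def artificial_neuron(inputs, weights, bias=0.0):
    """Conventional ANN neuron: returns one scalar per evaluation.

    ReLU is assumed here purely for illustration.
    """
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return max(0.0, total)


def spiking_neuron(input_current, threshold=1.0, leak=0.9):
    """Leaky integrate-and-fire neuron (an assumed textbook model).

    Returns the time steps at which the neuron fires, so the information
    is carried by *when* spikes occur rather than by a single scalar.
    """
    potential = 0.0
    spike_times = []
    for t, current in enumerate(input_current):
        # The membrane potential decays (leaks), then integrates new input.
        potential = potential * leak + current
        if potential >= threshold:
            spike_times.append(t)
            potential = 0.0  # reset after firing
    return spike_times


scalar_output = artificial_neuron([1.0, 2.0], [0.5, 0.25])
spikes = spiking_neuron([0.5, 0.5, 0.5, 0.5])
```

The first function produces a value; the second produces a list of firing times, which is closer in spirit to the "Asynchronous" column of the table, though still far from a shaped biological wave.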

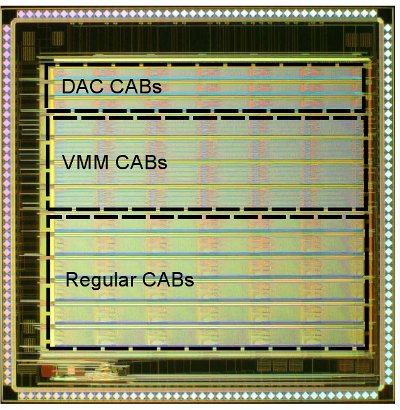

The TrueNorth chip sports one million neurons and 256 million synapses. But you can't buy one. The closest you can come today is perhaps to use an FPAA, a field-programmable analog array, which is the analog version of an FPGA. But FPAAs haven't scaled nearly as far as FPGAs. The largest FPAA is the RASP 2.9. The image of its die below comes from the thesis *Contributions to Neuromorphic and Reconfigurable Circuits and Systems*.

It has only 78 CABs (Computational Analog Blocks), in contrast to the largest FPGAs, which have over one million logic elements. Researchers in 2013 were able to simulate 18 neuromorphic neurons with this RASP 2.9 analog FPAA chip.

The human brain has 100 billion neurons, so it would hypothetically take 100,000 TrueNorth chips to approach equivalence, based on the number of neurons alone. Of course, the other factors, in particular the variable wave shape of biological neurons, would likely put any TrueNorth simulation of a brain at a great disadvantage. A lot more information can be carried in a wave shape than in a single scalar value. In the diagram at the top, the different wave shapes resulted from showing an animal light spots of different diameters. An artificial neural network, in contrast, would require N output neurons to represent N distinct diameters.
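The chip-count estimate is simple division, sketched below in Python using only the figures quoted above. The constant and function names are hypothetical, and the comparison deliberately ignores everything except raw neuron count.

```python
# Figures quoted in the text above.
HUMAN_BRAIN_NEURONS = 100_000_000_000  # 100 billion
TRUENORTH_NEURONS = 1_000_000          # one million per chip
TRUENORTH_SYNAPSES = 256_000_000       # 256 million per chip


def chips_needed(target_neurons: int, neurons_per_chip: int) -> int:
    """Number of chips required to reach a neuron count, rounding up."""
    # Integer ceiling division avoids floating-point rounding.
    return -(-target_neurons // neurons_per_chip)


chips = chips_needed(HUMAN_BRAIN_NEURONS, TRUENORTH_NEURONS)
synapses_per_neuron = TRUENORTH_SYNAPSES // TRUENORTH_NEURONS

print(f"TrueNorth chips needed: {chips:,}")
print(f"Synapses per TrueNorth neuron: {synapses_per_neuron}")
```

The same figures also give 256 synapses per TrueNorth neuron, a ratio the text does not discuss but which follows directly from the two chip totals.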

But with an analog FPAA, perhaps neurons that support wave shapes could be simulated, even if for now one may be limited to a dozen or so neurons. But then there is the real mystery: how a biological brain learns, and by extension how to train a neuromorphic system.